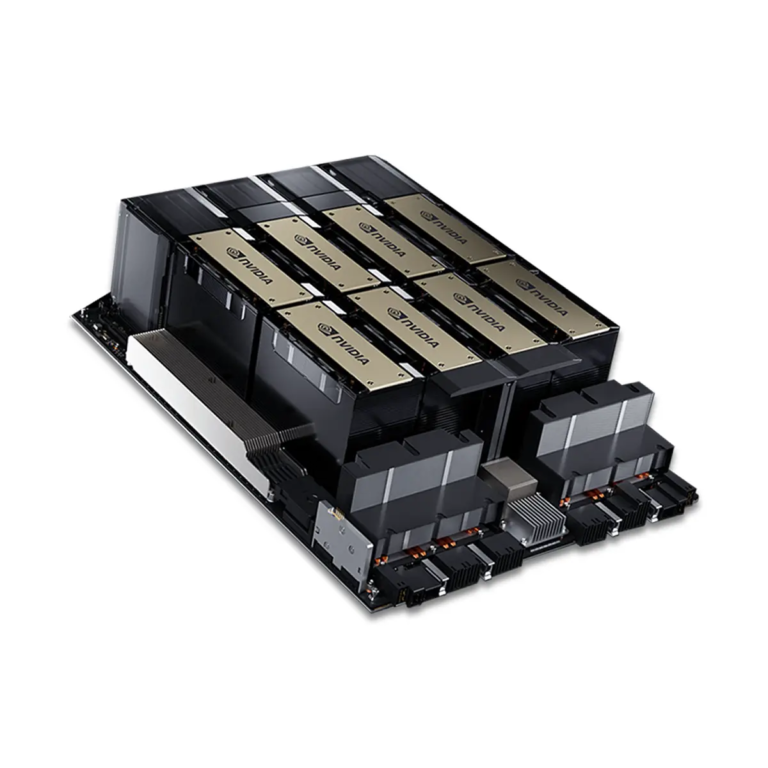

NVIDIA H100 8×80GB Baseboard

Price range: 10€ through 100€

Delivery is made within 14-21 days

The prices are presented for both corporate clients and individuals.

NVIDIA H100 8×80GB Baseboard. 8×H100 80GB SXM for state-of-the-art LLM performance. Direct import, vendor warranty, professional packaging, fast door-to-door delivery, and any payment method.

Product description

8×NVIDIA H100 80GB SXM GPU Baseboard: Ultimate AI and HPC Power

8×NVIDIA H100 SXM 80GB GPU Baseboard is a high-performance server platform featuring eight NVIDIA H100 GPUs with 80 GB of HBM3 memory each. Together, they provide an impressive 640 GB of ultra-fast memory and supercomputer-level compute power for the most demanding AI and high-performance computing (HPC) workloads.

Designed for modern data centers and AI clusters, this baseboard connects all GPUs via NVLink and NVSwitch, delivering up to 900 GB/s of NVLink bandwidth per GPU. Unlike PCIe-based setups, all accelerators operate as a unified compute fabric, which takes the host PCIe bus out of the GPU-to-GPU data path and improves multi-GPU scaling.
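To put the interconnect figures in perspective, the sketch below compares idealized transfer times over NVLink and over a PCIe 5.0 x16 link. The 900 GB/s NVLink value comes from the specifications on this page; the 128 GB/s PCIe value is an assumed nominal bidirectional peak, and the function names are illustrative only. Real transfers will be slower than these peak-rate estimates.

```python
# Peak bidirectional bandwidths in GB/s.
# NVLINK_GBPS is taken from this page's specification list.
# PCIE5_X16_GBPS is an assumed nominal peak for a PCIe 5.0 x16 link.
NVLINK_GBPS = 900.0
PCIE5_X16_GBPS = 128.0


def transfer_seconds(size_gb: float, bandwidth_gbps: float) -> float:
    """Idealized time to move size_gb gigabytes at a peak bandwidth."""
    if bandwidth_gbps <= 0:
        raise ValueError("bandwidth must be positive")
    return size_gb / bandwidth_gbps


def speedup(fast_gbps: float, slow_gbps: float) -> float:
    """Ratio between two peak bandwidths."""
    return fast_gbps / slow_gbps


if __name__ == "__main__":
    payload_gb = 80.0  # hypothetical payload: one GPU's full memory
    print(f"NVLink: {transfer_seconds(payload_gb, NVLINK_GBPS):.3f} s")
    print(f"PCIe 5.0 x16: {transfer_seconds(payload_gb, PCIE5_X16_GBPS):.3f} s")
    print(f"Peak ratio: {speedup(NVLINK_GBPS, PCIE5_X16_GBPS):.2f}x")
```

Under these assumptions the peak ratio is roughly 7x in favor of NVLink; sustained rates depend on message sizes, topology, and software stack.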

Specifications

- Series: NVIDIA Data Center GPU (H100)

- GPU Architecture: Hopper

- Total Memory: 640 GB HBM3

- Memory per GPU: 80 GB HBM3

- CUDA Cores: 135,168

- Tensor Cores: 4,224 (4th Gen)

- Theoretical Performance: approx. 535 TFLOPS FP32 combined (about 67 TFLOPS per GPU); FP16 Tensor throughput reaches up to 1,979 TFLOPS per GPU with sparsity

- Interconnect: NVLink + NVSwitch (up to 900 GB/s per GPU)

- Interface: PCIe 5.0 x16 (for baseboard integration)

- Form Factor: HGX SXM5

- Power Consumption: approx. 5600 W (8 GPUs combined)

- Cooling: Liquid-cooled or advanced air-cooled solutions (used in Cray, HPE, and OEM systems)
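The combined figures in this list are simply per-GPU values multiplied by eight. The sketch below shows that arithmetic; the `GpuSpec` class and `baseboard_totals` function are hypothetical names for illustration, and the per-GPU values of 528 Tensor Cores and 700 W are derived by dividing the totals above by eight.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GpuSpec:
    """Per-GPU figures for a single H100 SXM module."""
    memory_gb: int
    cuda_cores: int
    tensor_cores: int
    power_watts: int


# Per-GPU values; tensor_cores and power_watts are derived from the
# combined totals listed above (4,224 / 8 and 5,600 W / 8).
H100_SXM = GpuSpec(memory_gb=80, cuda_cores=16_896,
                   tensor_cores=528, power_watts=700)


def baseboard_totals(gpu: GpuSpec, gpu_count: int = 8) -> dict:
    """Multiply each per-GPU figure by the number of GPUs on the board."""
    return {
        "memory_gb": gpu.memory_gb * gpu_count,
        "cuda_cores": gpu.cuda_cores * gpu_count,
        "tensor_cores": gpu.tensor_cores * gpu_count,
        "power_watts": gpu.power_watts * gpu_count,
    }


if __name__ == "__main__":
    for name, value in baseboard_totals(H100_SXM).items():
        print(f"{name}: {value:,}")
```

The power total covers the GPUs only; a complete server also needs a budget for CPUs, NVSwitch chips, fans or pumps, and storage.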

Advantages of Baseboard vs. Separate GPUs

- Unified architecture. All eight GPUs act as a single computational system, which is essential for training large language models (LLMs) whose weights and optimizer state have to be spread across the combined 640 GB of GPU memory.

- Extreme interconnect speed. NVSwitch enables direct GPU-to-GPU data exchange at up to 900 GB/s per GPU, roughly seven times the bandwidth of a PCIe 5.0 x16 link.

- Infrastructure efficiency. Shared cooling, power, and communication modules significantly reduce energy usage and simplify integration.

- Cost optimization. In real-world training and inference workloads, SXM modules can deliver a higher return on investment than multiple PCIe GPUs.
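Whether a given model fits into the board's 640 GB can be roughly estimated from its parameter count. The sketch below assumes 2 bytes per parameter for FP16 inference weights and about 16 bytes per parameter for mixed-precision training with the Adam optimizer (a common rule of thumb). It ignores activations, KV cache, and framework overhead, so treat the results as a lower bound. All names are illustrative.

```python
BASEBOARD_MEMORY_GB = 640.0  # 8 x 80 GB, from this page's specifications

# Assumed rule-of-thumb memory costs per parameter, in bytes.
BYTES_FP16_INFERENCE = 2.0    # FP16 weights only
BYTES_ADAM_TRAINING = 16.0    # FP16 weights and gradients, FP32 master
                              # weights, and two FP32 Adam moments


def required_gb(params_billion: float, bytes_per_param: float) -> float:
    """Memory in GB for the model state (1e9 params x bytes / 1e9 bytes per GB)."""
    return params_billion * bytes_per_param


def fits_on_baseboard(params_billion: float, bytes_per_param: float,
                      capacity_gb: float = BASEBOARD_MEMORY_GB) -> bool:
    """True if the model state alone fits within the given capacity."""
    return required_gb(params_billion, bytes_per_param) <= capacity_gb


if __name__ == "__main__":
    for size in (7, 30, 70):  # hypothetical model sizes, billions of parameters
        infer = required_gb(size, BYTES_FP16_INFERENCE)
        train = required_gb(size, BYTES_ADAM_TRAINING)
        print(f"{size}B params: inference ~{infer:.0f} GB "
              f"(fits: {fits_on_baseboard(size, BYTES_FP16_INFERENCE)}), "
              f"training ~{train:.0f} GB "
              f"(fits: {fits_on_baseboard(size, BYTES_ADAM_TRAINING)})")
```

Under these assumptions a 70-billion-parameter model fits comfortably for FP16 inference (about 140 GB) but not for full Adam training (about 1,120 GB), which would call for several boards or memory-saving techniques.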

Applications

- AI and LLM training. Large-scale neural networks such as GPT, DeepSeek, Qwen, and Mistral.

- HPC simulations. Molecular dynamics, physics, energy systems, and climate modeling.

- Generative AI. Training multimodal and large generative models requiring high data throughput.

- Cloud infrastructure. Scalable GPU clusters for enterprise and research workloads.

Why Choose NVIDIA H100 80GB SXM Baseboard

- Eight H100 GPUs in one unit — providing supercomputer-class compute density.

- NVLink/NVSwitch architecture ensures performance impossible to achieve with PCIe solutions.

- OEM design offers DGX-equivalent architecture at a more accessible cost.

- Future-ready platform for scaling next-generation AI clusters and data centers.

8×NVIDIA H100 SXM 80GB GPU Baseboard is the foundation for next-generation AI infrastructure — delivering maximum speed, scalability, and reliability. Ideal for organizations developing advanced LLMs, generative AI, and HPC workloads requiring unmatched computational performance.

Additional information

| Attribute | Value |
|---|---|
| Weight | 1.8 kg |
| Dimensions | 26.7 × 11.1 cm |
| Country of manufacture | Taiwan |
| Manufacturer's warranty (years) | 1 |
| Model | NVIDIA H100 |
| Cache L2 (MB) | 50 |
| Process technology (nm) | 4 |
| Memory type | HBM3 |
| Graphics Processing Unit (Chip) | |
| Number of CUDA cores (per GPU) | 16,896 |
| Number of Tensor cores (per GPU) | 528 |
| GPU Frequency (MHz) | 1590 |
| GPU Boost Frequency (MHz) | 1980 |
| Video memory size (GB, per GPU) | 80 |
| Memory frequency (MHz) | 18000 |
| Memory bus width (bits) | 5120 |
| Memory Bandwidth (GB/s) | 3350 |
| Connection interface (PCIe) | PCIe 5.0 x16 |
| FP16 Tensor performance (TFLOPS, with sparsity) | 1979 |
| TF32 Tensor performance (TFLOPS, with sparsity) | 989 |
| FP64 performance (TFLOPS) | 49 |
| Cooling type | Passive (server module) |
| Number of occupied slots (pcs) | 8 |
| Length (cm) | 26.7 |
| Width (cm) | 11.1 |
| Weight (kg) | 1.8 |
| Temperature range (°C) | 0–85 |
| NVLink Throughput (GB/s) | 900 |
| Multi-GPU support | Yes, via NVLink |
| Virtualization/MIG support | MIG (up to 7 instances) |
